hyper-resolution flood hazard mapping at the national scale

Günter Blöschl, Andreas Buttinger-Kreuzhuber, Daniel Cornel, Julia Eisl, Michael Hofer, Markus Hollaus, Zsolt Horváth, Jürgen Komma, Artem Konev, Juraj Parajka, Norbert Pfeifer, Andreas Reithofer, José Salinas, Peter Valent, Roman Výleta, Jürgen Waser, Michael H. Wimmer, Heinz Stiefelmeyer

Hyper-Resolution Flood Hazard Mapping at the National Scale,

In Natural Hazards and Earth System Sciences, 24(6), pages 2071-2091, 2024.

Abstract: Flood hazard mapping is currently in a transitional phase involving the use of data and methods that were traditionally in the domain of local studies in a regional or nationwide context. Challenges include the representation of local information such as hydrological particularities and small hydraulic structures, as well as computational and labour costs. This paper proposes a methodology of flood hazard mapping that merges the best of the two worlds (local and regional studies) based on experiences in Austria. The analysis steps include (a) quality control and correction of river network and catchment boundary data; (b) estimation of flood discharge peaks and volumes on the entire river network; (c) creation of a digital elevation model (DEM) that is consistent with all relevant flood information, including riverbed geometry; and (d) simulation of inundation patterns and velocities associated with a consistent flood return period across the entire river network. In each step, automatic methods are combined with manual interventions in order to maximise the efficiency and at the same time ensure estimation accuracy similar to that of local studies. The accuracy of the estimates is evaluated in each step. The study uses flood discharge records from 781 stations to estimate flood hazard patterns of a given return period at a resolution of 2 m over a total stream length of 38 000 km. It is argued that a combined local–regional methodology will advance flood mapping, making it even more useful in nationwide or global contexts.

Link: Publisher's version

Download: BibTeX citation

hora 3d: personalized flood risk visualization as an interactive web service

Silvana Rauer-Zechmeister, Daniel Cornel, Bernhard Sadransky, Zsolt Horváth, Artem Konev, Andreas Buttinger-Kreuzhuber, Raimund Heidrich-Ressnik, Günter Blöschl, Eduard Gröller, Jürgen Waser

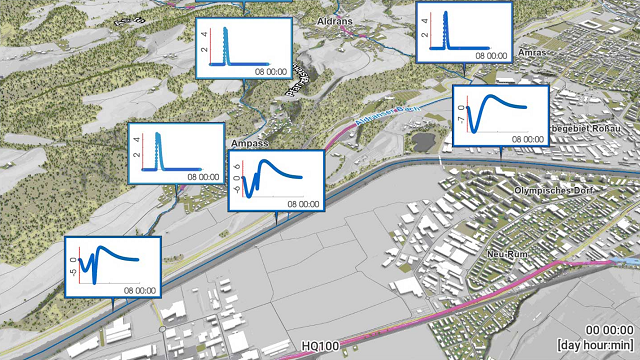

HORA 3D: Personalized Flood Risk Visualization as an Interactive Web Service,

In Computer Graphics Forum (Proceedings EuroVis 2024), 43(3), article no. e15110, 12 pages, 2024. Best Paper Award.

Abstract: We propose an interactive web-based application to inform the general public about personal flood risks. Flooding is the natural hazard affecting most people worldwide. Protection against flooding is not limited to mitigation measures, but also includes communicating its risks to affected individuals to raise awareness and preparedness for its adverse effects. Until now, this has mostly been done with static and indiscriminate 2D maps of the water depth. These flood hazard maps can be difficult to interpret and the user has to derive a personal flood risk based on prior knowledge. In addition to the hazard, the flood risk has to consider the exposure of one's own house and premises to high water depths and flow velocities as well as the vulnerability of particular parts. Our application is centered around an interactive personalized visualization to raise awareness of these risk factors for an object of interest. We carefully extract and show only the relevant information from large precomputed flood simulation and geospatial data to keep the visualization simple and comprehensible. To achieve this goal, we extend various existing approaches and combine them with new real-time visualization and interaction techniques in 3D. A new view-dependent focus+context design guides user attention and supports an intuitive interpretation of the visualization to perform predefined exploration tasks. HORA 3D enables users to individually inform themselves about their flood risks. We evaluated the user experience through a broad online survey with 87 participants of different levels of expertise, who rated the helpfulness of the application with 4.7 out of 5 on average.

Download: PDF file (29.3 MB)

Download: Supplemental material - Quick Start Guide (1.7 MB)

Download: Supplemental material - Evaluation data (3.4 MB)

Download: BibTeX citation

analytical assessment of street-level tree canopy in austrian cities: identification of re-naturalisation potentials of the urban fabric

Milena Vuckovic, Johanna Schmidt, Daniel Cornel

Analytical Assessment of Street-Level Tree Canopy in Austrian Cities: Identification of Re-Naturalisation Potentials of the Urban Fabric,

In Nature-Based Solutions, 3, article no. 100062, 11 pages, 2023.

Abstract: Climate change-related impacts such as heat stress, extreme weather events, and their consequences are particularly felt within densely built-up urban areas. Urban re-naturalisation is recognised as a promising cost-effective strategy for improving urban resilience to these environmental and societal issues. In this respect, the present contribution focuses on a comprehensive city-wide assessment of street-level urban tree canopy to detect the existing vegetation gaps and identify re-naturalisation potentials within the urban fabric for the three largest cities in Austria (Vienna, Graz, and Linz). The study relies on QGIS and R software environments to carry out spatial data analytics on georeferenced tree cadastre data and the global map of Local Climate Zones (LCZ) to compute spatial density maps and urban structure parameters. The results suggest a rather random distribution of tree clusters and highly fragmented instances within the city. This fragmentation is predominantly observed within central densely built urban fabric for all three cities, with the absence of street trees in peripheral urbanised areas being more prominent in Graz and Linz. The computed percentage of potentially disconnected areas in terms of the tree canopy for Vienna, Graz, and Linz amounts to 45 %, 54 %, and 37 %, respectively. The analysis of urban structure revealed a homogeneous densely built-up character over central districts and a dominantly heterogeneous character across non-central and peripheral districts with a more openly built fabric (this heterogeneity is generally more prominent in the case of Graz and Linz). In general, there are numerous opportunities for urban re-naturalisation, focusing on urban trees within such openly arranged buildings. Due to the evident physical constraints of the urban space within central districts, the consideration of building-level greening (e.g. green roofs, green facades) may be a better approach to urban re-naturalisation in these cases. Given the further constraints imposed by the abundance of historic buildings, such measures may not be feasible in every event. Hence, this calls for consideration of novel, innovative and emerging greening systems with the proposition of movable vertical green screens and vertical gardens.

Download: PDF file (7.8 MB)

Download: BibTeX citation

watertight incremental heightfield tessellation

Daniel Cornel, Silvana Zechmeister, Eduard Gröller, Jürgen Waser

Watertight Incremental Heightfield Tessellation,

In IEEE Transactions on Visualization and Computer Graphics, 29(9), pages 3888-3899, 2023.

Abstract: In this paper, we propose a method for the interactive visualization of medium-scale dynamic heightfields without visual artifacts. Our data fall into a category too large to be rendered directly at full resolution, but small enough to fit into GPU memory without pre-filtering and data streaming. We present the real-world use case of unfiltered flood simulation data of such medium scale that need to be visualized in real time for scientific purposes. Our solution uses compute shaders to maintain a guaranteed watertight triangulation in GPU memory that approximates the interpolated heightfields with view-dependent, continuous levels of detail. In each frame, the triangulation is updated incrementally by iteratively refining the cached result of the previous frame to minimize the computational effort. In particular, we minimize the number of heightfield sampling operations to make adaptive and higher-order interpolations viable options. We impose no restriction on the number of subdivisions and the achievable level of detail to allow for extreme zoom ranges required in geospatial visualization. Our method provides a stable runtime performance and can be executed with a limited time budget. We present a comparison of our method to three state-of-the-art methods, in which our method is competitive with previous non-watertight methods in terms of runtime, while outperforming them in terms of accuracy.

Download: PDF file (8.1 MB)

Download: Supplemental material - Source code and binaries (8.14 MB)

Download: IEEE VIS 2022 PowerPoint slides (661 MB)

Download: BibTeX citation

an integrated gpu-accelerated modeling framework for high-resolution simulations of rural and urban flash floods

Andreas Buttinger-Kreuzhuber, Artem Konev, Zsolt Horváth, Daniel Cornel, Ingo Schwerdorf, Günter Blöschl, Jürgen Waser

An Integrated GPU-Accelerated Modeling Framework for High-Resolution Simulations of Rural and Urban Flash Floods,

In Environmental Modelling & Software, 156, article no. 105480, 15 pages, 2022.

Abstract: This paper presents an integrated modeling framework aiming at accurate predictions of flood hazard from heavy rainfalls. The accuracy of such predictions generally depends on the complexity and resolution of the employed model components. We propose an integration of complementary models in one framework that uses GPUs to improve accuracy and simulation time. The spatially distributed runoff model integrates surface flow routing based on the full shallow water equations, infiltration based on the Green–Ampt equation, and interception. In urban areas, the runoff model is coupled with the Storm Water Management Model (SWMM). The integrated model is validated and tested on laboratory, rural and urban scenarios with regard to accuracy and computational efficiency. The GPU acceleration yields speedups of 1000 times compared to a CPU implementation and enables the coupled simulation of flash floods at 1 m resolution for an urban area of 200 km² in real time.

Link: Publisher's version

Download: BibTeX citation
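
The Green–Ampt equation named in the abstract above is commonly written as f = K_s (1 + ψ Δθ / F), where F is the cumulative infiltration, K_s the saturated hydraulic conductivity, ψ the wetting-front suction head, and Δθ the moisture deficit. The following Python sketch steps this relation explicitly under constant rainfall. The function names, the parameter values, and the explicit time stepping are illustrative assumptions and are not taken from the paper's GPU implementation.

```python
def green_ampt_capacity(k_sat, suction_head, moisture_deficit, cumulative):
    """Infiltration capacity f = K_s * (1 + psi * dtheta / F); unbounded while F is zero."""
    if cumulative <= 0.0:
        return float("inf")
    return k_sat * (1.0 + suction_head * moisture_deficit / cumulative)


def simulate_infiltration(rain_rate, dt, steps, k_sat, suction_head, moisture_deficit):
    """Explicit time stepping: the actual rate is limited by rainfall and by capacity."""
    cumulative = 0.0
    rates = []
    for _ in range(steps):
        capacity = green_ampt_capacity(k_sat, suction_head, moisture_deficit, cumulative)
        rate = min(rain_rate, capacity)
        cumulative += rate * dt
        rates.append(rate)
    return cumulative, rates


# Hypothetical values: 50 mm/h of rain for 2 h on soil with K_s = 10 mm/h,
# suction head 100 mm, and moisture deficit 0.3.
total, rates = simulate_infiltration(
    rain_rate=50.0, dt=0.01, steps=200,
    k_sat=10.0, suction_head=100.0, moisture_deficit=0.3,
)
print(f"infiltrated {total:.1f} mm, final rate {rates[-1]:.1f} mm/h")
```

In this sketch, all rain infiltrates at first; once the capacity drops below the rainfall rate, the excess would become surface runoff for the flow routing.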

locally relevant high-resolution hydrodynamic modeling of river floods at the regional scale

Andreas Buttinger-Kreuzhuber, Jürgen Waser, Daniel Cornel, Zsolt Horváth, Artem Konev, Michael H. Wimmer, Jürgen Komma, Günter Blöschl

Locally Relevant High-Resolution Hydrodynamic Modeling of River Floods at the Regional Scale,

In Water Resources Research, 58(7), article no. e2021WR030820, 22 pages, 2022.

Abstract: This paper deals with the simulation of inundated areas for a region of 84,000 km² from estimated flood discharges at a resolution of 2 m. We develop a modeling framework that enables efficient parallel processing of the project region by splitting it into simulation tiles. For each simulation tile, the framework automatically calculates all input data and boundary conditions required for the hydraulic simulation on-the-fly. A novel method is proposed that ensures regionally consistent flood peak probabilities. Instead of simulating individual events, the framework simulates effective hydrographs consistent with the flood quantiles by adjusting streamflow at river nodes. The model accounts for local effects from buildings, culverts, levees, and retention basins. The two-dimensional full shallow water equations are solved by a second-order accurate scheme for all river reaches in Austria with catchment sizes over 10 km², totaling 33,380 km. Using graphics processing units (GPUs), a single NVIDIA Titan RTX simulates a period of 3 days for a tile with 50 million wet cells in less than 3 days. We find good agreement between simulated and measured stage–discharge relationships at gauges. The simulated flood hazard maps also compare well with local high-quality flood maps, achieving critical success index scores of 0.6–0.79.

Link: Publisher's version

Download: BibTeX citation
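
The critical success index (CSI) reported in the abstract above is a standard score for comparing a simulated flood extent with a reference map: hits divided by the sum of hits, misses, and false alarms. The Python sketch below computes it for two small wet/dry grids. The grids and the function name are made up for illustration and do not come from the paper's data.

```python
def critical_success_index(simulated, reference):
    """CSI = hits / (hits + misses + false alarms) for two equally sized wet/dry grids."""
    hits = misses = false_alarms = 0
    for sim_row, ref_row in zip(simulated, reference):
        for sim_wet, ref_wet in zip(sim_row, ref_row):
            if sim_wet and ref_wet:
                hits += 1          # wet in both maps
            elif ref_wet:
                misses += 1        # wet only in the reference map
            elif sim_wet:
                false_alarms += 1  # wet only in the simulation
    total = hits + misses + false_alarms
    if total == 0:
        raise ValueError("neither grid contains wet cells")
    return hits / total


# Hypothetical 3x4 grids, 1 = wet, 0 = dry.
simulated = [
    [1, 1, 0, 0],
    [1, 1, 1, 0],
    [0, 1, 0, 0],
]
reference = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 1, 0, 0],
]
print(round(critical_success_index(simulated, reference), 3))  # 5 hits, 1 miss, 1 false alarm -> 0.714
```

Cells that are dry in both maps do not enter the score, so large dry areas cannot inflate it.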

an improved triangle encoding scheme for cached tessellation

Bernhard Kerbl, Linus Horváth, Daniel Cornel, Michael Wimmer

An Improved Triangle Encoding Scheme for Cached Tessellation,

In Eurographics 2022 – Short Papers, pages 53-56, 2022.

Abstract: With the recent advances in real-time rendering that were achieved by embracing software rasterization, the interest in alternative solutions for other fixed-function pipeline stages rises. In this paper, we revisit a recently presented software approach for cached tessellation, which compactly encodes and stores triangles in GPU memory. While the proposed technique is both efficient and versatile, we show that the original encoding is suboptimal and provide an alternative scheme that acts as a drop-in replacement. As shown in our evaluation, the proposed modifications can yield performance gains of 40 % and more.

Download: PDF file (2.2 MB)

Download: BibTeX citation

hochwasserrisikozonierung austria 3.0

Günter Blöschl, Jürgen Waser, Andreas Buttinger-Kreuzhuber, Daniel Cornel, Julia Eisl, Michael Hofer, Markus Hollaus, Zsolt Horváth, Jürgen Komma, Artem Konev, Juraj Parajka, Norbert Pfeifer, Andreas Reithofer, José Salinas, Peter Valent, Alberto Viglione, Michael H. Wimmer, Heinz Stiefelmeyer

HOchwasserRisikozonierung Austria 3.0 (HORA 3.0),

In Österreichische Wasser- und Abfallwirtschaft, 12 pages, 2022.

Abstract: The present article summarises the strategy and methodological steps of the HORA 3.0 project, in which flood risk zones have been calculated for all of Austria. The analysis steps include: quality control and correction of the stream network and the catchment boundaries; estimation of flood peaks and volumes of a given return period; establishment of a digital elevation model that matches all relevant flood information, including river bed geometry; unsteady, two-dimensional simulations of flood inundation patterns with consistent return periods. In each step, automatic and manual methods are combined in order to map the local hydrological and hydraulic conditions as accurately as possible in a nationwide project. The flood risk zones with a resolution of 2 m for a total river length of 32,000 km have already been published on the HORA platform (www.hora.gv.at). The methodologies developed can be used for further projects, e.g. for visualizations, damage evaluations and in the future for the mapping of pluvial flood hazards.

Link: Publisher's version - PDF file (2.9 MB)

Download: BibTeX citation

an attempt of adaptive heightfield rendering with complex interpolants using ray casting

Daniel Cornel, Zsolt Horváth, Jürgen Waser

An Attempt of Adaptive Heightfield Rendering with Complex Interpolants Using Ray Casting,

In arXiv e-prints, article no. abs/2201.10887, 9 pages, 2022.

Abstract: In this technical report, we document our attempt to visualize adaptive heightfields with smooth interpolation using ray casting in real time. The performance of ray casting depends strongly on the used interpolant and its efficient evaluation. Unfortunately, analytical solutions for ray-surface intersections are only given in the literature for very few simple, piece-wise polynomial surfaces. In our use case, we approximate the heightfield with radial basis functions defined on an adaptive grid, for which we propose a two-step solution: First, we reconstruct and discretize the currently visible portion of the surface with smooth approximation into a set of off-screen buffers. In a second step, we interpret these off-screen buffers as regular heightfields that can be rendered efficiently with ray casting using a simple bilinear interpolant. While our approach works, our quantitative evaluation shows that the performance depends strongly on the complexity and size of the heightfield. Real-time performance cannot be achieved for arbitrary heightfields, which is why we report our findings as a failed attempt to use ray casting for practical geospatial visualization in real time.

Download: PDF file (12.1 MB)

Download: BibTeX citation
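
The second step described in the abstract above treats the off-screen buffers as regular heightfields with a simple bilinear interpolant. The Python sketch below shows only that interpolant on a regular grid, not the ray casting or the radial-basis reconstruction. The function name and the clamping at the grid border are assumptions made for illustration.

```python
import math


def sample_bilinear(heights, x, y):
    """Bilinearly interpolate a regular heightfield (at least 2x2) at grid coordinates (x, y)."""
    rows, cols = len(heights), len(heights[0])
    # Clamp the query point to the grid so border samples stay defined.
    x = min(max(x, 0.0), cols - 1.0)
    y = min(max(y, 0.0), rows - 1.0)
    # Lower-left corner of the cell; the last cell also covers the far border.
    x0 = min(int(math.floor(x)), cols - 2)
    y0 = min(int(math.floor(y)), rows - 2)
    tx, ty = x - x0, y - y0
    # Interpolate along x on both cell edges, then along y between them.
    lower = heights[y0][x0] * (1.0 - tx) + heights[y0][x0 + 1] * tx
    upper = heights[y0 + 1][x0] * (1.0 - tx) + heights[y0 + 1][x0 + 1] * tx
    return lower * (1.0 - ty) + upper * ty


# Hypothetical 2x2 heightfield.
heights = [
    [0.0, 1.0],
    [2.0, 3.0],
]
print(sample_bilinear(heights, 0.5, 0.5))  # centre of the cell -> 1.5
```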

combining 2d and 3d visualization with visual analytics in the environmental domain

Milena Vuckovic, Johanna Schmidt, Thomas Ortner, Daniel Cornel

Combining 2D and 3D Visualization with Visual Analytics in the Environmental Domain,

In Information, 13(1), article no. 7, 21 pages, 2022.

Abstract: The application potential of Visual Analytics (VA), with its supporting interactive 2D and 3D visualization techniques, in the environmental domain is unparalleled. Such advanced systems may enable an in-depth interactive exploration of multifaceted geospatial and temporal changes in very large and complex datasets. This is facilitated by a unique synergy of modules for simulation, analysis, and visualization, offering instantaneous visual feedback of transformative changes in the underlying data. However, even if the resulting knowledge holds great potential for supporting decision-making in the environmental domain, the consideration of such techniques still has to find its way into daily practice. To advance these developments, we demonstrate four case studies that portray different opportunities in data visualization and VA in the context of climate research and natural disaster management. Firstly, we focus on 2D data visualization and explorative analysis for climate change detection and urban microclimate development through a comprehensive time series analysis. Secondly, we focus on the combination of 2D and 3D representations and investigations for flood and storm water management through comprehensive flood and heavy rain simulations. These examples are by no means exhaustive, but serve to demonstrate how a VA framework may apply to practical research.

Download: PDF file (17.0 MB)

Download: BibTeX citation

integrated simulation and visualization for flood management

Daniel Cornel, Andreas Buttinger-Kreuzhuber, Jürgen Waser

Integrated Simulation and Visualization for Flood Management,

In Special Interest Group on Computer Graphics and Interactive Techniques Conference Talks (SIGGRAPH ’20 Talks), article no. 55, 2 pages, 2020.

Download: PDF file (1.8 MB)

Download: BibTeX citation

current european flood-rich period exceptional compared with past 500 years

Günter Blöschl, Andrea Kiss, Alberto Viglione, Mariano Barriendos, Oliver Böhm, Rudolf Brázdil, Denis Coeur, Gaston Demarée, Maria Carmen Llasat, Neil Macdonald, Dag Retsö, Lars Roald, Petra Schmocker-Fackel, Inês Amorim, Monika Bělínová, Gerardo Benito, Chiara Bertolin, Dario Camuffo, Daniel Cornel, Radosław Doktor, Líbor Elleder, Silvia Enzi, João Carlos Garcia, Rüdiger Glaser, Julia Hall, Klaus Haslinger, Michael Hofstätter, Jürgen Komma, Danuta Limanówka, David Lun, Andrei Panin, Juraj Parajka, Hrvoje Petrić, Fernando S. Rodrigo, Christian Rohr, Johannes Schönbein, Lothar Schulte, Luís Pedro Silva, Willem H. J. Toonen, Peter Valent, Jürgen Waser, Oliver Wetter

Current European Flood-Rich Period Exceptional Compared with Past 500 Years,

In Nature, 583, pages 560-566, 2020.

Abstract: There are concerns that recent climate change is altering the frequency and magnitude of river floods in an unprecedented way. Historical studies have identified flood-rich periods in the past half millennium in various regions of Europe. However, because of the low temporal resolution of existing datasets and the relatively low number of series, it has remained unclear whether Europe is currently in a flood-rich period from a long-term perspective. Here we analyse how recent decades compare with the flood history of Europe, using a new database composed of more than 100 high-resolution (sub-annual) historical flood series based on documentary evidence covering all major regions of Europe. We show that the past three decades were among the most flood-rich periods in Europe in the past 500 years, and that this period differs from other flood-rich periods in terms of its extent, air temperatures and flood seasonality. We identified nine flood-rich periods and associated regions. Among the periods richest in floods are 1560–1580 (western and central Europe), 1760–1800 (most of Europe), 1840–1870 (western and southern Europe) and 1990–2016 (western and central Europe). In most parts of Europe, previous flood-rich periods occurred during cooler-than-usual phases, but the current flood-rich period has been much warmer. Flood seasonality is also more pronounced in the recent period. For example, during previous flood and interflood periods, 41 per cent and 42 per cent of central European floods occurred in summer, respectively, compared with 55 per cent of floods in the recent period. The exceptional nature of the present-day flood-rich period calls for process-based tools for flood-risk assessment that capture the physical mechanisms involved, and management strategies that can incorporate the recent changes in risk.

Link: Publisher's version

Download: BibTeX citation

interactive visualization of simulation data for geospatial decision support

Daniel Cornel

Interactive Visualization of Simulation Data for Geospatial Decision Support,

PhD thesis, TU Wien, 134 pages, 2020. EuroVis Best PhD Dissertation Award 2021.

Abstract: Floods are catastrophic events that claim thousands of human lives every year. For the prediction of these events, interactive decision support systems with integrated flood simulation have become a vital tool. Recent technological advances made it possible to simulate flooding scenarios of unprecedented scale and resolution, resulting in very large time-dependent data. The amount of simulation data is further amplified by the use of ensemble simulations to make predictions more robust, yielding high-dimensional and uncertain data far too large for manual exploration. New strategies are therefore needed to filter these data and to display only the most important information to support domain experts in their daily work. This includes the communication of results to decision makers, emergency services, stakeholders, and the general public. A modern decision support system has to be able to provide visual results that are useful for domain experts, but also comprehensible for larger audiences. Furthermore, for an efficient workflow, the entire process of simulation, analysis, and visualization has to happen in an interactive fashion, putting serious time constraints on the system.

In this thesis, we present novel visualization techniques for time-dependent and uncertain flood, logistics, and pedestrian simulation data for an interactive decision support system. As the heterogeneous tasks in flood management require very diverse visualizations for different target audiences, we provide solutions to key tasks in the form of task-specific and user-specific visualizations. This allows the user to show or hide detailed information on demand to obtain comprehensible and aesthetic visualizations to support the task at hand. In order to identify the impact of flooding incidents on a building of interest, only a small subset of all available data is relevant, which is why we propose a solution to isolate this information from the massive simulation data. To communicate the inherent uncertainty of resulting predictions of damages and hazards, we introduce a consistent style for visualizing the uncertainty within the geospatial context. Instead of directly showing simulation data in a time-dependent manner, we propose the use of bidirectional flow maps with multiple components as a simplified representation of arbitrary material flows. For the communication of flood risks in a comprehensible way, however, the direct visualization of simulation data over time can be desired. Apart from the obvious challenges of the complex simulation data, the discrete nature of the data introduces additional problems for the realistic visualization of water surfaces, for which we propose robust solutions suitable for real-time applications. All of our findings have been acquired through a continuous collaboration with domain experts from several flood-related fields of work. The thorough evaluation of our work by these experts confirms the relevance and usefulness of our presented solutions.

Download: PDF file (42 MB)

Download: BibTeX citation

comparison of fast shallow-water schemes on real-world floods

Zsolt Horváth, Andreas Buttinger-Kreuzhuber, Artem Konev, Daniel Cornel, Jürgen Komma, Günter Blöschl, Sebastian Noelle, Jürgen Waser

Comparison of Fast Shallow-Water Schemes on Real-World Floods,

In Journal of Hydraulic Engineering, 146(1), article no. 05019005, 16 pages, 2020.

Abstract: Two-dimensional shallow-water schemes on Cartesian grids are amenable to graphics processing units and thus a convenient choice for fast flood simulations. A comparison of recent schemes and validation of important use cases is essential for developers and practitioners working with flood simulation tools. In this paper, we discuss three state-of-the-art shallow-water schemes: a first-order upwind scheme, a second-order upwind scheme, and a second-order central-upwind scheme. We analyze the advantages and disadvantages of each scheme on historical Danube river floods at three regions in Austria. We study the Lobau region as a floodplain with several small channels, the Wachau region with the meandering Danube in a steep valley, and the Marchfeld region located at the river confluence of March and Danube. The validation case studies show that the second-order schemes provide better estimates of the water levels than the first-order scheme. Still, the first-order scheme is useful because it offers fast simulations and reasonable results at higher resolutions. The best trade-off between accuracy and computational effort for simulating river floods is provided by the second-order upwind scheme.

Link: Publisher's version - PDF file (2.9 MB)

Download: BibTeX citation

interactive visualization of flood and heavy rain simulations

Daniel Cornel, Andreas Buttinger-Kreuzhuber, Artem Konev, Zsolt Horváth, Michael Wimmer, Raimund Heidrich, Jürgen Waser

Interactive Visualization of Flood and Heavy Rain Simulations,

In Computer Graphics Forum (Proceedings EuroVis 2019), 38(3), pages 25-39, 2019. Best Paper Award.

Abstract: In this paper, we present a real-time technique to visualize large-scale adaptive height fields with C1-continuous surface reconstruction. Grid-based shallow water simulation is an indispensable tool for interactive flood management applications. Height fields defined on adaptive grids are often the only viable option to store and process the massive simulation data. Their visualization requires the reconstruction of a continuous surface from the spatially discrete simulation data. For regular grids, fast linear and cubic interpolation are commonly used for surface reconstruction. For adaptive grids, however, there exists no higher-order interpolation technique fast enough for interactive applications. Our proposed technique bridges the gap between fast linear and expensive higher-order interpolation for adaptive surface reconstruction. During reconstruction, no matter if regular or adaptive, discretization and interpolation artifacts can occur, which domain experts consider misleading and unaesthetic. We take into account boundary conditions to eliminate these artifacts, which include water climbing uphill, diving towards walls, and leaking through thin objects. We apply realistic water shading with visual cues for depth perception and add waves and foam synthesized from the simulation data to emphasize flow directions. The versatility and performance of our technique are demonstrated in various real-world scenarios. A survey conducted with domain experts of different backgrounds and concerned citizens proves the usefulness and effectiveness of our technique.

Download: PDF file (70.3 MB)

Download: Raw online survey results (32 KB)

Download: BibTeX citation

Download: EuroVis 2019 PowerPoint slides (425 MB)

master of disaster: virtual-reality response training in disaster management

Katharina Krösl, Harald Steinlechner, Johanna Donabauer, Daniel Cornel, Jürgen Waser

Master of Disaster: Virtual-Reality Response Training in Disaster Management,

In Proceedings of VRCAI 2019, the 17th International Conference on Virtual-Reality Continuum and Its Applications in Industry, article no. 49, 2 pages, 2019.

Abstract: To be prepared for flooding events, disaster response personnel have to be trained to execute developed action plans. We present a collaborative operator-trainee setup for a flood response training system that connects an interactive flood simulation with a VR client, which allows steering the remote simulation from within the virtual environment, deploying protection measures, and evaluating results of different simulation runs. An operator supervises and assists the trainee from a linked desktop application.

Download: PDF file (4.7 MB)

Download: Poster (26.6 MB)

Download: BibTeX citation

3d annotations for geospatial decision support systems

Silvana Zechmeister, Daniel Cornel, Jürgen Waser

3D Annotations for Geospatial Decision Support Systems,

In Journal of WSCG, 27(2), pages 141-150, 2019.

Abstract: In virtual 3D environments, it is easy to lose orientation while navigating or changing the view with zooming and panning operations. In the real world, annotated maps are an established tool to orient oneself in large and unknown environments. The use of annotations and landmarks in traditional maps can also be transferred to virtual environments. But occlusions by three-dimensional structures have to be taken into account as well as performance considerations for an interactive real-time application. Furthermore, annotations should be discreetly integrated into the existing 3D environment and not distract the viewer’s attention from more important features. In this paper, we present an implementation of automatic annotations based on open data to improve the spatial orientation in the highly interactive and dynamic decision support system Visdom. We distinguish between line and area labels for object-specific labeling, which facilitates a direct association of the labels with their corresponding objects or regions. The final algorithm provides clearly visible, easily readable and dynamically adapting annotations with continuous levels of detail integrated into an interactive real-time application.

Download: PDF file (5.8 MB)

Download: BibTeX citation

fast cutaway visualization of sub-terrain tubular networks

Artem Konev, Manuel Matusich, Ivan Viola, Hendrik Schulze, Daniel Cornel, Jürgen Waser

Fast Cutaway Visualization of Sub-Terrain Tubular Networks,

In Computers & Graphics, 75, pages 25-35, 2018.

Abstract: This paper proposes a context-preserving 3D visualization technique for interactive view- and distance-dependent cutaway visualization that reveals the subsurface urban infrastructure network. The infrastructure itself is displayed using the procedural billboarding technique, and its internals are revealed through a new cutaway algorithm that operates directly on the procedurally generated structures in the billboard proxy geometry. Both described cutaway techniques achieve interactive frame rates for the infrastructural network of a mid-sized city. Performance benchmarks and a domain expert evaluation support the potential usefulness of this technique in general and its particular utilization for the sewer network visualization.

Download: PDF file (8.9 MB)

Download: BibTeX citation

forced random sampling

Daniel Cornel, Robert F. Tobler, Hiroyuki Sakai, Christian Luksch, Michael Wimmer

Forced Random Sampling: fast generation of importance-guided blue-noise samples,

In The Visual Computer, 33(6), pages 833-843, 2017.

Abstract: In computer graphics, stochastic sampling is frequently used to efficiently approximate complex functions and integrals. The error of approximation can be reduced by distributing samples according to an importance function, but cannot be eliminated completely. To avoid visible artifacts, sample distributions are sought to be random, but spatially uniform, which is called blue-noise sampling. The generation of unbiased, importance-guided blue-noise samples is expensive and not feasible for real-time applications. Sampling algorithms for these applications focus on runtime performance at the cost of having weak blue-noise properties. Blue-noise distributions have also been proposed for digital halftoning in the form of precomputed dither matrices. Ordered dithering with such matrices allows dots to be distributed with blue-noise properties according to a grayscale image. By the nature of ordered dithering, this process can be parallelized easily. We introduce a novel sampling method called forced random sampling that is based on forced random dithering, a variant of ordered dithering with blue noise. By shifting the main computational effort into the generation of a precomputed dither matrix, our sampling method runs efficiently on GPUs and allows real-time importance sampling with blue noise for a finite number of samples. We demonstrate the quality of our method in two different rendering applications.

Link: Publisher's version

Download: Supplemental material - Source code, binaries, and dither matrices (17.3 MB)

Download: BibTeX citation

Download: CGI 2017 PowerPoint slides (19.2 MB)

Download: Author's accepted manuscript - PDF file (4.5 MB)

This is the author's version of the work. The final publication is available at Springer via https://dx.doi.org/10.1007/s00371-017-1392-7.

kepler shuffle for real-world flood simulations on gpus

Zsolt Horváth, Rui A. P. Perdigão, Jürgen Waser, Daniel Cornel, Artem Konev, Günter Blöschl

Kepler Shuffle for Real-World Flood Simulations on GPUs,

In The International Journal of High Performance Computing Applications, 30(4), pages 379-395, 2016.

Abstract: We present a new graphics processing unit implementation of two second-order numerical schemes of the shallow water equations on Cartesian grids. Previous implementations are not fast enough to evaluate multiple scenarios for a robust, uncertainty-aware decision support. To tackle this, we exploit the capabilities of the NVIDIA Kepler architecture. We implement a scheme developed by Kurganov and Petrova (KP07) and a newer, improved version by Horváth et al. (HWP14). The KP07 scheme is simpler but suffers from incorrect high velocities along the wet/dry boundaries, resulting in small time steps and long simulation runtimes. The HWP14 scheme resolves this problem but comprises a more complex algorithm. Previous work has shown that HWP14 has the potential to outperform KP07, but no practical implementation has been provided. The novel shuffle-based implementation of HWP14 presented here takes advantage of its accuracy and performance capabilities for real-world usage. The correctness and performance are validated on real-world scenarios.

Download: PDF file (3.1 MB)

Download: BibTeX citation

composite flow maps

Daniel Cornel, Artem Konev, Bernhard Sadransky, Zsolt Horváth, Andrea Brambilla, Ivan Viola, Jürgen Waser

Composite Flow Maps,

In Computer Graphics Forum (Proceedings EuroVis 2016), 35(3), pages 461-470, 2016.

Abstract: Flow maps are widely used to provide an overview of geospatial transportation data. Existing solutions lack the support for the interactive exploration of multiple flow components at once. Flow components are given by different materials being transported, different flow directions, or by the need for comparing alternative scenarios. In this paper, we combine flows as individual ribbons in one composite flow map. The presented approach can handle an arbitrary number of sources and sinks. To avoid visual clutter, we simplify our flow maps based on a force-driven algorithm, accounting for restrictions with respect to application semantics. The goal is to preserve important characteristics of the geospatial context. This feature also enables us to highlight relevant spatial information on top of the flow map such as traffic conditions or accessibility. The flow map is computed on the basis of flows between zones. We describe a method for auto-deriving zones from geospatial data according to application requirements. We demonstrate the method in real-world applications, including transportation logistics, evacuation procedures, and water simulation. Our results are evaluated with experts from corresponding fields.

Download: PDF file (15.0 MB)

Download: BibTeX citation

Download: EuroVis 2016 PowerPoint slides (203 MB)

visualization of object-centered vulnerability to possible flood hazards

Daniel Cornel, Artem Konev, Bernhard Sadransky, Zsolt Horváth, Eduard Gröller, Jürgen Waser

Visualization of Object-Centered Vulnerability to Possible Flood Hazards,

In Computer Graphics Forum (Proceedings EuroVis 2015), 34(3), pages 331-341, 2015. Best Paper Award, 3rd place.

Abstract: As flood events tend to happen more frequently, there is a growing demand for understanding the vulnerability of infrastructure to flood-related hazards. Such demand exists both for flood management personnel and the general public. Modern software tools are capable of generating uncertainty-aware flood predictions. However, the information addressing individual objects is incomplete, scattered, and hard to extract. In this paper, we address vulnerability to flood-related hazards focusing on a specific building. Our approach is based on the automatic extraction of relevant information from a large collection of pre-simulated flooding events, called a scenario pool. From this pool, we generate uncertainty-aware visualizations conveying the vulnerability of the building of interest to different kinds of flooding events. On the one hand, we display the adverse effects of the disaster on a detailed level, ranging from damage inflicted on the building facades or cellars to the accessibility of the important infrastructure in the vicinity. On the other hand, we provide visual indications of the events to which the building of interest is vulnerable in particular. Our visual encodings are displayed in the context of urban 3D renderings to establish an intuitive relation between geospatial and abstract information. We combine all the visualizations in a lightweight interface that enables the user to study the impacts and vulnerabilities of interest and explore the scenarios of choice. We evaluate our solution with experts involved in flood management and public communication.

Download: PDF file (14.3 MB)

Download: BibTeX citation

Download: EuroVis 2015 PowerPoint slides (55.7 MB)

run watchers: automatic simulation-based decision support in flood management

Artem Konev, Jürgen Waser, Bernhard Sadransky, Daniel Cornel, Rui A. P. Perdigão, Zsolt Horváth, M. Eduard Gröller

Run Watchers: Automatic Simulation-Based Decision Support in Flood Management,

In IEEE Transactions on Visualization and Computer Graphics (Proceedings IEEE VAST), 20(12), pages 1873-1882, 2014.

Abstract: In this paper, we introduce a simulation-based approach to design protection plans for flood events. Existing solutions require a lot of computation time for an exhaustive search, or demand time-consuming expert supervision and steering. We present a faster alternative based on the automated control of multiple parallel simulation runs. Run Watchers are dedicated system components authorized to monitor simulation runs, terminate them, and start new runs originating from existing ones according to domain-specific rules. This approach allows for a more efficient traversal of the search space and overall performance improvements due to a re-use of simulated states and early termination of failed runs. In the course of the search, Run Watchers generate large and complex decision trees. We visualize the entire set of decisions made by Run Watchers using interactive, clustered timelines. In addition, we present visualizations to explain the resulting response plans. Run Watchers automatically generate storyboards to convey plan details and to justify the underlying decisions, including those which leave particular buildings unprotected. We evaluate our solution with domain experts.

Download: PDF file (1.0 MB)

Download: BibTeX citation

analysis of forced random sampling

Daniel Cornel

Analysis of Forced Random Sampling,

Master's thesis, TU Wien, Institute of Computer Graphics and Algorithms, Vienna, Austria, 85 pages, 2014.

Abstract: Stochastic sampling is an indispensable tool in computer graphics which allows approximating complex functions and integrals in finite time. Applications which rely on stochastic sampling include ray tracing, remeshing, stippling and texture synthesis. In order to cover the sample domain evenly and without regular patterns, the sample distribution has to guarantee spatial uniformity without regularity and is said to have blue-noise properties. Additionally, the samples need to be distributed according to an importance function such that the sample distribution satisfies a given sampling probability density function globally while being well distributed locally. The generation of optimal blue-noise sample distributions is expensive, which is why a lot of effort has been devoted to finding fast approximate blue-noise sampling algorithms. Most of these algorithms, however, are either not applicable in real time or have weak blue-noise properties.

Forced Random Sampling is a novel algorithm for real-time importance sampling. Samples are generated by thresholding a precomputed dither matrix with the importance function. By the design of the matrix, the sample points show desirable local distribution properties and are adapted to the given importance. In this thesis, an efficient and parallelizable implementation of this algorithm is proposed and analyzed regarding its sample distribution quality and runtime performance. The results are compared to both the qualitative optimum of blue-noise sampling and the state of the art of real-time importance sampling, which is Hierarchical Sample Warping. With this comparison, it is investigated whether Forced Random Sampling is competitive with current sampling algorithms.

The analysis of sample distributions includes several discrepancy measures and the sample density to evaluate their spatial properties as well as Fourier and differential domain analyses to evaluate their spectral properties. With these established methods, it is shown that Forced Random Sampling generates samples with approximate blue-noise properties in real time. Compared to the state of the art, the proposed algorithm is able to generate samples of higher quality with less computational effort and is therefore a valid alternative to current importance sampling algorithms.
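As a rough illustration of the thresholding step described in this abstract, the following Python sketch emits a sample wherever a matrix entry falls below the scaled importance. It is not the thesis implementation: the function names, the `budget` parameter, and the comparison rule `rank < importance * budget` are assumptions made for illustration. The matrix is a random permutation standing in for a precomputed forced random dither matrix, so the output does not have blue-noise properties.

```python
import random


def make_placeholder_matrix(n, seed=0):
    """Return an n x n matrix holding each rank 0..n*n-1 exactly once.

    Placeholder only (assumption): a random permutation has white-noise,
    not blue-noise, properties. A real forced random dither matrix would
    be precomputed offline and loaded here instead.
    """
    ranks = list(range(n * n))
    random.Random(seed).shuffle(ranks)
    return [ranks[row * n:(row + 1) * n] for row in range(n)]


def threshold_samples(matrix, importance, budget):
    """Threshold the matrix with the importance function.

    A sample is emitted at a cell centre when the cell's rank is below
    importance(u, v) * budget, with importance expected in [0, 1].
    The rule and the name `budget` are hypothetical, chosen for clarity.
    """
    n = len(matrix)
    samples = []
    for y in range(n):
        for x in range(n):
            # Cell centre in the unit square
            u, v = (x + 0.5) / n, (y + 0.5) / n
            if matrix[y][x] < importance(u, v) * budget:
                samples.append((u, v))
    return samples
```

With a 32 x 32 matrix and a constant importance of 1, `threshold_samples(matrix, lambda u, v: 1.0, 100)` returns exactly 100 samples, because the matrix contains each rank once. Every cell is tested independently of the others, which is what makes this kind of thresholding easy to parallelize.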

Download: PDF file (8.4 MB)

imprint

This website is maintained by ![]()

Carrier pigeon: No longer accepted due to repeated abuse.

drivenbynostalgia.com is a private, non-commercial website intended for self-archival of scientific work. All content on this website is published under the right to freedom of speech as declared in the Universal Declaration of Human Rights and is to be interpreted as my opinion at a certain point in time, or as the opinion of a third party where denoted. Hence, I do not claim correctness or harmlessness of any of the content provided on this website.

This website contains links to third-party websites whose content I cannot control and for which I cannot be held liable. I added these links at a time when the linked websites did not seem to contain any offensive or illegal content. I do not permanently check all linked websites for availability and content changes, so please inform me if a link has to be changed or removed.

All compiled and source code files provided on this website are provided as is and without any guarantee of executability, safety or security. They are published in a publicly available private archive, are to be interpreted as coding suggestions, and should not be used without an examination of the code - as with anything you download from the internet. Several applications depend on third-party libraries which might be included in the downloads. Please consider the respective licences and copyright regulations.

If not denoted otherwise in the code files themselves, all of my code published on this website is published under the BSD 3-clause license!